Click the link to read the article on The Land Desk website (Jonathan P. Thompson):

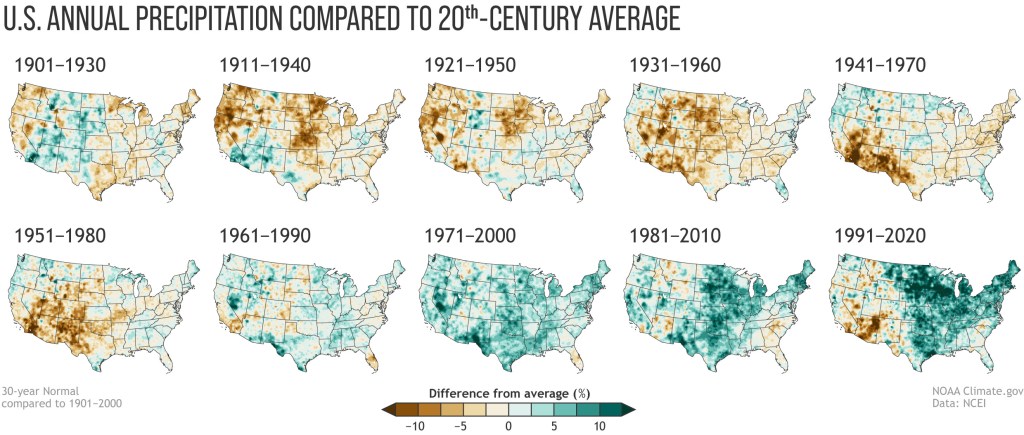

Now that the 2022-23 meteorological winter is over, it’s safe to say that it was an abnormally wet and snowy winter. Some places in the Sierra Nevada, for example, have seen two to four times the normal amount of snow.

Even southern Californians grappled with blizzards (not normal), and an avalanche was triggered on Mount San Jacinto near Palm Springs (not normal). Silverton, Colorado, was buried in several feet of snow, suffered an hours-long power outage, and was isolated from the outside world when the passes in both directions closed due to avalanches. Two skiers were killed by an avalanche near Vallecito Reservoir in southwestern Colorado. The tragedy was made all the more shocking by the relatively low elevation (8,400 feet) at which the accident occurred, in a place that normally doesn’t receive enough snow to create significant avalanche hazards.

You may have noticed that I used the term “normal” several times in the preceding paragraphs. But what does normal really mean? It seems so subjective, a somewhat derogatory descriptor of something boring. And yet, meteorologists and climate scientists use the term all the time to let us know whether a winter’s snowfall or temperatures fall within an average (or median) historic range or not.

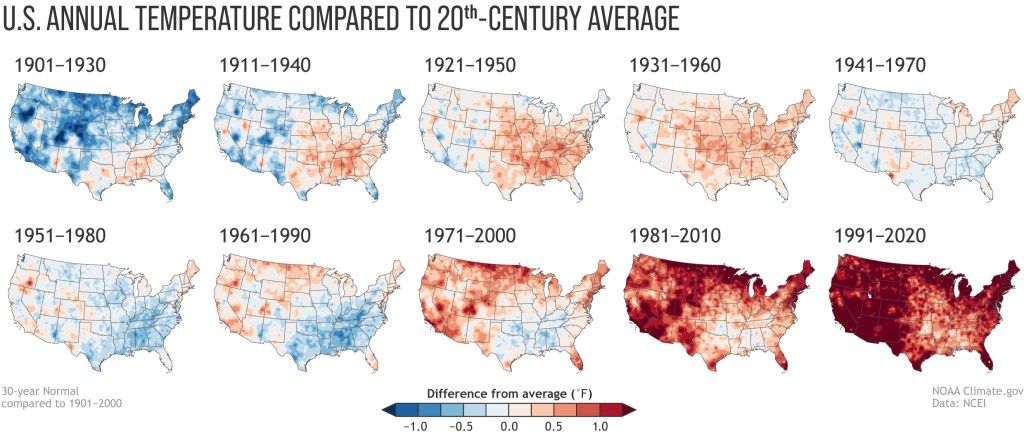

That makes sense. But weirdly, the National Oceanic and Atmospheric Administration determines its normals by looking only at the most recent three decades. So, for example, if one were to say the snow water equivalent at the Spratt Creek SNOTEL station in northeastern California is currently 450% of normal (the actual reading on March 3), they would mean that it’s four and a half times the 1991-2020 median…
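For anyone who wants to see the arithmetic behind a "percent of normal" figure, here is a minimal sketch. It assumes "normal" is simply the median of baseline readings taken on the same calendar day. The function name `percent_of_normal` and every number in it are hypothetical, chosen only to illustrate the math; they are not actual SNOTEL data, and NOAA's own processing may differ.

```python
from statistics import median


def percent_of_normal(current, baseline_values):
    """Return `current` as a percentage of the median of `baseline_values`."""
    normal = median(baseline_values)
    if normal == 0:
        # A zero median makes "percent of normal" undefined.
        raise ValueError("baseline median is zero; percent of normal is undefined")
    return 100.0 * current / normal


# Hypothetical snow water equivalent readings, in inches, for one calendar day.
# A real 30-year baseline would have 30 values; three keep the example short.
baseline_swe = [2.0, 4.0, 6.0]
current_swe = 18.0

print(percent_of_normal(current_swe, baseline_swe))  # 450.0
```

Swapping in a longer baseline list would change the median, and with it the percentage, which is exactly why the choice of baseline period matters.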

That, understandably, irks some folks, since it seems like a rather short period of time to use to define “normal.” Also, if those three decades were, say, drier than the decades that preceded them, wouldn’t that skew things? Why not go back to the beginning of record keeping? The National Weather Service has an answer: the criterion was chosen by the international meteorological community in the 1930s because many countries didn’t have reliable record keeping prior to 1900. So, the thirty-year standard was a bit of an accident of timing, and it stuck.

Which is fine and good, but it still leaves me feeling empty, kind of like when I eat a Blake’s Lotaburger and they forget to add the green chile. It just seems far more valuable to be able to compare current conditions to as deep a historic record as possible. The good news is that, in addition to using the 30-year normal, NOAA also tracks the 20th-century averages, so we can at least easily compare the new normal to the old and compare both to the 20th-century average.